Track 1 - EmpathyEval

Evaluation of Contextualized Affective Speech

This track evaluates whether omni-models can identify the most appropriate spoken response by combining contextual understanding with affective and paralinguistic reasoning, advancing AI capabilities for empathetic perception in speech.

Task Description

Given a textual context, an audio utterance, and a set of candidate audio responses, select the most empathetic response.

Evaluation compares participant predictions against human annotations, so the benchmark emphasizes human-aligned response selection rather than literal semantic overlap alone.

Subtasks

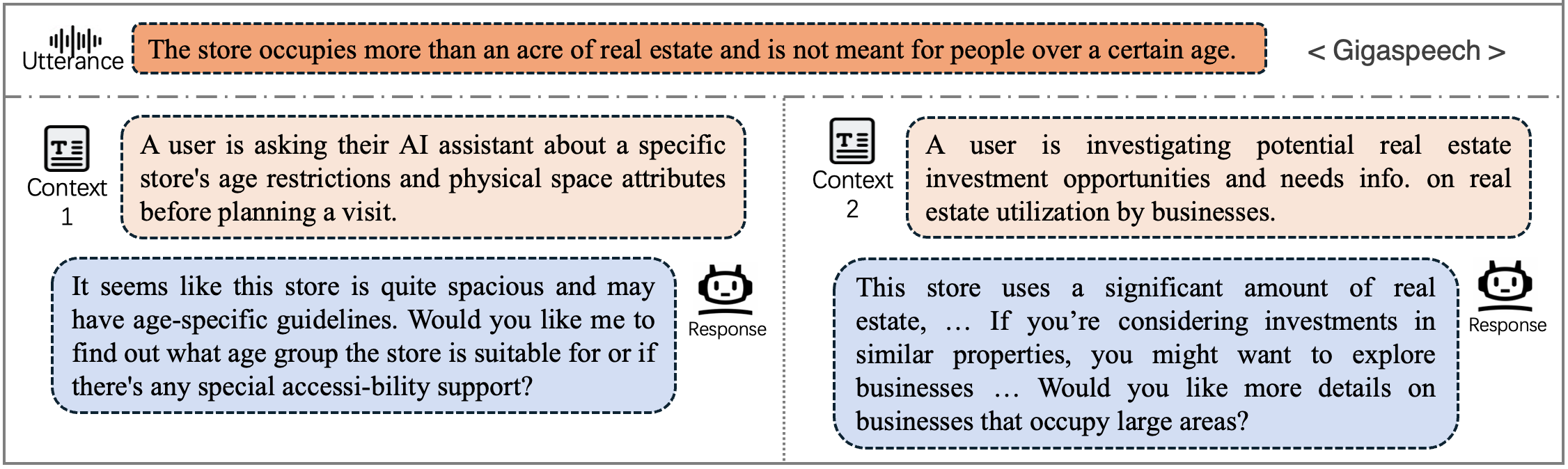

Task 1: Context-Variant

The surrounding conversational context changes, indicating different situations, and the model must determine which candidate response best fits the specific situation.

Data Example (Audio and textual context)

| | Context 1 | Context 2 |
|---|---|---|
| Context | During a bachelorette weekend, their friends surprised them with a spa package that included a manicure, and they’ve never been to a nail salon before. | After losing a fantasy football bet, the agreed penalty was to get a bright, glittery manicure and keep it for a week. |
| Utterance Audio | (audio) | (audio) |
| Candidate Responses | A, B ✓ | A, B ✓ |

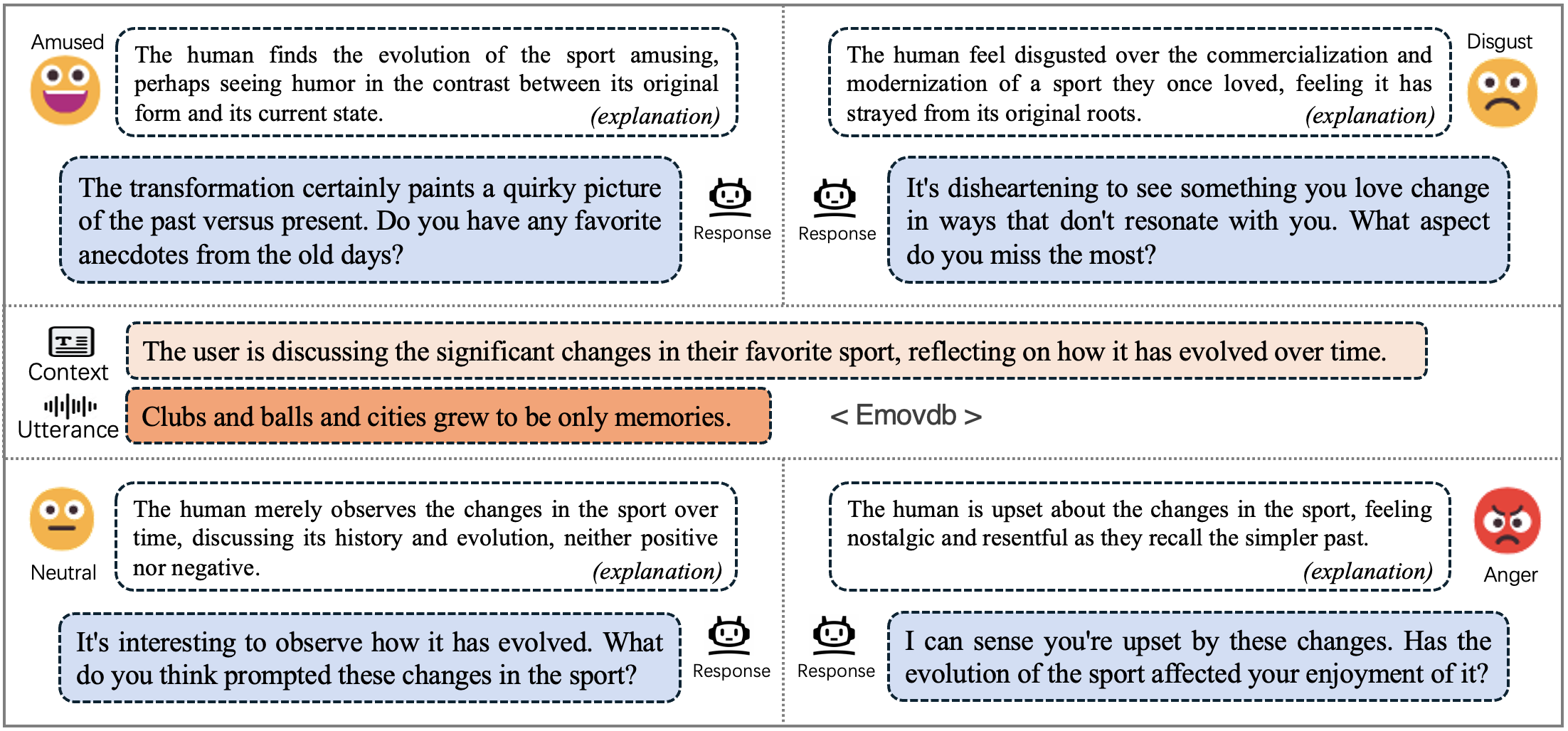

Task 2: Tone-Variant

The model must rely on vocal and paralinguistic cues of the utterance to infer the inner state or emotion of the user, and identify which response is emotionally appropriate for the given utterance.

Data Example (Audio and textual context)

Context

While helping my mom clear out the guest room before her knee surgery, I pulled out a dusty box labeled "1996-2002" packed with camp Polaroids and birthday party prints. I brought the stack home to sort through after dinner a few nights later.

| | Tone 1 | Tone 2 |
|---|---|---|
| Utterance Audio | (audio) | (audio) |
| Candidate Responses | A ✓, B | A ✓, B |

Evaluation Metrics

- Accuracy: for each correctly predicted item, the accuracy score increases by 1.

- Grouped bonus: if all items in a context-variant or tone-variant group are predicted correctly, the bonus score increases by 1.

- Final Score: (Accuracy + Bonus) / (#data + #group), where #data is the total number of items and #group is the total number of groups.
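The scoring rule above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the official scorer: the function name `final_score`, the dictionary-based input layout, and the item and group identifiers are all hypothetical, and a missing prediction is treated as incorrect.

```python
from collections import defaultdict


def final_score(predictions, labels, groups):
    """Return (Accuracy + Bonus) / (#data + #group).

    predictions, labels: dicts mapping item id -> chosen candidate (e.g. "A").
    groups: dict mapping item id -> id of its context-variant or
            tone-variant group.
    All names and the input layout are assumptions made for this sketch.
    """
    if not labels:
        raise ValueError("labels must contain at least one item")

    accuracy = 0
    # Every group starts as "all correct" and is flipped by any miss.
    group_all_correct = defaultdict(lambda: True)

    for item_id, gold in labels.items():
        correct = predictions.get(item_id) == gold  # missing counts as wrong
        accuracy += int(correct)
        group_id = groups[item_id]
        group_all_correct[group_id] = group_all_correct[group_id] and correct

    # One bonus point per group whose items are all predicted correctly.
    bonus = sum(1 for ok in group_all_correct.values() if ok)
    return (accuracy + bonus) / (len(labels) + len(group_all_correct))


# Toy example: two groups of two items each (made-up ids and answers).
labels = {"c1": "B", "c2": "B", "t1": "A", "t2": "A"}
groups = {"c1": "g1", "c2": "g1", "t1": "g2", "t2": "g2"}
predictions = {"c1": "B", "c2": "B", "t1": "A", "t2": "B"}

# Accuracy = 3, Bonus = 1 (only g1 is fully correct): (3 + 1) / (4 + 2).
print(final_score(predictions, labels, groups))
```

Because a group earns its bonus only when every item in it is correct, a model that answers one variant correctly but misses its paired variant scores lower than the plain accuracy would suggest.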

Dataset

Please download the training set for each subtask from Hugging Face: gracehuggingface/EmpathyEval

Leaderboard

The leaderboard will report context-variant and tone-variant results separately, together with the weighted average of the final scores.

| Model | Context-variant | Tone-variant | Avg. |
|---|---|---|---|
| Qwen-Omni (baseline model) | accuracy / bonus / Final Score | accuracy / bonus / Final Score | Weighted average of final scores |
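The "Avg." column combines the two subtask final scores into a weighted average. The weights are not specified here, so the sketch below takes them as an explicit argument; the function name `weighted_average` and the example numbers are hypothetical and do not reflect the organizers' actual weighting.

```python
def weighted_average(final_scores, weights):
    """Weighted mean of per-subtask final scores.

    final_scores: list of final scores, one per subtask.
    weights: list of non-negative weights, one per subtask. Which weights
             the leaderboard uses is NOT stated in the track description;
             they are an explicit input here for that reason.
    """
    if len(final_scores) != len(weights) or not weights:
        raise ValueError("final_scores and weights must be non-empty and equal in length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(s * w for s, w in zip(final_scores, weights)) / total


# Made-up scores and weights, for illustration only.
context_variant_score = 0.8
tone_variant_score = 0.6
print(weighted_average([context_variant_score, tone_variant_score], [3, 1]))
```

With equal weights this reduces to the plain mean of the two final scores.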

Public scores will be announced after the evaluation process.